"Fancy Autocomplete" Is A Generationally Significant Technology

The mistake isn’t calling it autocomplete. The mistake is thinking that’s trivial.

In Ezra Klein’s recent interview with Jack Clark, he was grappling with an AI metaphor:

“There’s still an argument you’ll hear that these are fancy autocomplete machines. They’re just predicting the next token, a couple of tokens make a word — they don’t have understanding.”

“…as my understanding [..] goes, we’re still dealing with some kind of prediction model. On the other hand, when I use them, it doesn’t feel that way to me.”

My brother also asked me this last weekend while talking about GPT Agent Mode - “this isn’t still autocomplete under the hood, is it?”

And I understand the instinct. This feels so much more powerful and so much more significant than simple predict-the-next-word autocomplete.

From Ezra again:

“On the one hand, I don’t think these are now just fancy autocomplete systems. And on the other hand, I’m not sure what metaphor makes sense.

Do you still see these A.I. systems as souped-up autocomplete or do you think that metaphor has lost its power?”

I want to suggest that the flaw he’s struggling with in the “just fancy autocomplete” metaphor isn’t the “autocomplete”, it’s the “just”.

Fancy autocomplete is a profoundly significant technology.

Let me explain why.

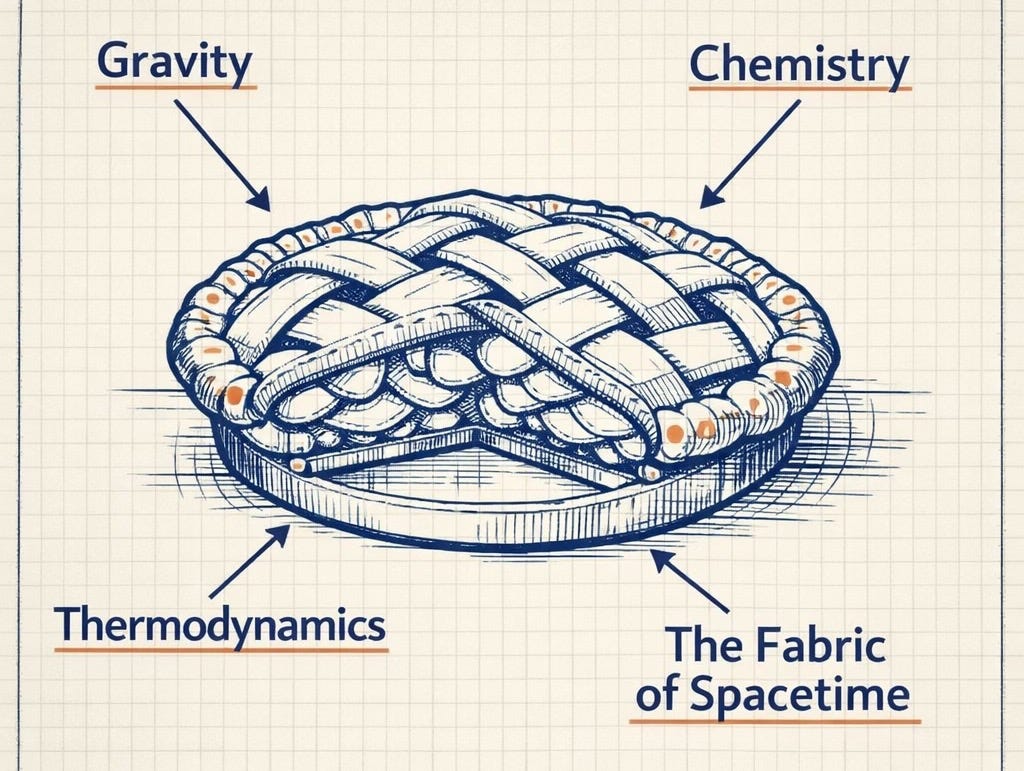

To Make an Apple Pie from Scratch

Carl Sagan has this amazing quote in Cosmos where he reminds us that:

If you wish to make an apple pie from scratch, you must first invent the universe.

It’s both absurd and profound. I love it so much.

Imagine trying to build a computer that simulates an apple pie.

If you want a fuzzy, approximate simulation, you might only need an image renderer to make a picture of an apple pie. But if you want it to be really accurate, you need accurate chemistry modelling to perfect the texture of the crust and accurate physics engines to simulate the crumbs falling and the heat dissipating.

It turns out that “simulate” can be a very elastic concept, with wildly variable degrees of accuracy.

Carl Sagan’s “from scratch” is equally elastic - it can mean starting anywhere from raw ingredients to the big bang.

Which brings us back to “Fancy autocomplete”. Just how elastic can “fancy” be?

At face value, “predicting the next word” sounds simple. We’ve had phones that could predict the next word for over a decade. It doesn’t sound revolutionary.

But it depends on what level of fidelity you want from that prediction.

Take this sentence:

John’s favourite colour is ___

A very basic autocomplete could just do statistical analysis on a bank of English language text and know that “blue” is the most commonly mentioned colour.
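As a rough illustration of that kind of basic autocomplete, here is a minimal sketch that counts which word most often follows a given word in a tiny corpus. The corpus and the function name `most_common_after` are invented for this example; a real phone keyboard is more elaborate than this.

```python
from collections import Counter

# Toy corpus, invented purely for illustration.
CORPUS = (
    "the sky is blue . her favourite colour is blue . "
    "his favourite colour is green . the sea is blue . "
    "my favourite colour is red ."
).split()

def most_common_after(corpus, prev_word):
    """Return the word that most often follows prev_word in the corpus."""
    followers = Counter(
        nxt for cur, nxt in zip(corpus, corpus[1:]) if cur == prev_word
    )
    return followers.most_common(1)[0][0]

# The predictor only looks at the single previous word, nothing earlier.
prediction = most_common_after(CORPUS, "is")
print(prediction)  # blue
```

Because this predictor only ever sees the one word before the blank, nothing written earlier in the text can change its answer.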

But now what about this sentence:

John’s car is orange. John’s house is orange. John’s cat is orange. John’s socks are orange. John’s favourite colour is ___

If you get autocomplete that can accurately predict that the answer is orange, not blue, what you have implicitly created is a model that contains:

- The concept of “favourite”

- The concept of properties (things having colours)

- The concept of colours as attributes of objects and people

- The concept of “John” as a persistent entity

Now imagine an even richer preceding paragraph describing John’s childhood, his relationships, his plans for the future.

To accurately predict the next word in that context, you need a model that has internalised a vast amount of structure contained within the English language.

This might not be as complex as simulating the entire universe of physics, chemistry, and biology. It’s “only” every facet of language. But language describes every facet of human experience — and every facet of the universe that humans have encountered.

So to build a “fancy autocomplete” that can truly predict the next word at very high fidelity, you are implicitly building a system that encodes a model of everything in the world as humans describe it.

This is profoundly powerful. It’s why AI labs are investing huge sums in GPUs and electricity to train these systems to detect patterns in language at scale.

When people say “it’s just fancy autocomplete,” their mistake is not understanding just how powerful autocomplete becomes when pushed to extreme levels of accuracy.

The Many Applications of Fancy Autocomplete

Where people also go wrong is failing to appreciate the wildly creative scaffolding that researchers and product teams have built around fancy autocomplete.

ChatGPT is a great example.

In raw form, a language model might autocomplete text like a novel:

> “What should I do in Paris?”…

< John asked Sally before his big trip…

Smart people have further trained the model so that the patterns it completes are not just the most likely text in any context, but the most likely text in a conversational back and forth between a human and a helpful assistant.

Through system prompting, reinforcement learning, and product scaffolding, we nudged fancy autocomplete into reliably producing:

- Helpful answers

- Working code instead of broken code

- Structured outputs instead of rambling prose
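One way to picture the conversational framing is that the chat is flattened into a single piece of text that stops exactly where the assistant should start talking, so "completing the text" and "answering the question" become the same thing. The sketch below assumes a made-up `System:/User:/Assistant:` layout and a hypothetical helper `as_transcript`; it is not any real product's template.

```python
def as_transcript(system, turns):
    """Flatten a conversation into one text that ends where the assistant speaks.

    The 'System:/User:/Assistant:' labels are an invented format for
    illustration, not a real product's template.
    """
    lines = [f"System: {system}"]
    for role, text in turns:
        lines.append(f"{role}: {text}")
    lines.append("Assistant:")  # the model's job is to continue from here
    return "\n".join(lines)

prompt = as_transcript(
    "You are a helpful assistant.",
    [("User", "What should I do in Paris?")],
)
print(prompt)
```

With this framing, the most likely continuation is a reply to the user rather than the next line of a novel about John and Sally.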

One Word At A Time

“Fancy autocomplete” is also a useful metaphor for understanding the natural limitations these systems face.

They are only “thinking” one word at a time, so their smallest unit of computation is actually quite limited. To output a 5-word sentence, they first have to output the first word, then re-run the first word to predict the second word, then re-run the first two to predict the third, then re-run the first three to predict the fourth, and so on.

This means that a 10-word answer is roughly 10 times as expensive to output as a 1-word answer.

It also means that a short, 1 word answer allows for only 10% of the “thinking” or computation that a 10 word answer does.
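The loop described above can be sketched in a few lines. Here `toy_next_word` is a hypothetical stand-in for the model (it just cycles through canned words), and `generate` counts how many prediction steps are needed. The only point is that the number of steps grows with the number of words produced.

```python
def toy_next_word(context):
    """Hypothetical stand-in for a language model: one word from all prior words."""
    canned = ["the", "cat", "sat", "down", "quietly"]
    return canned[len(context) % len(canned)]

def generate(prompt_words, n_new_words, next_word=toy_next_word):
    """Produce n_new_words one at a time, feeding the growing context back in."""
    context = list(prompt_words)
    passes = 0
    for _ in range(n_new_words):
        word = next_word(context)  # one prediction step per output word
        passes += 1
        context.append(word)       # the new word becomes part of the next input
    return context[len(prompt_words):], passes

words_1, passes_1 = generate([], 1)
words_10, passes_10 = generate([], 10)
print(passes_1, passes_10)  # 1 10
```

Real systems cache intermediate results so that each step does not redo all earlier work, but the step count still scales with the length of the output.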

And it means that LLMs are naturally trapped by how they start. If the first word is “yes”, then they have to keep going in that direction. They can’t know where they’re headed before they start.

These limits should be severe, but some incredibly smart people keep finding ways around them.

As a funny example, Google researchers recently showed that simply repeating a prompt improves the system’s performance. From <query> to <query><query>.

As in, changing

“Write me a legal letter to argue a parking fine”

to

“Write me a legal letter to argue a parking fine. Write me a legal letter to argue a parking fine”

will, in some cases, triple the accuracy!

One YouTuber called it “The “Stupidest” AI Breakthrough That Actually Works.” It’s all very funny, but there is a reason it works. With <query>, the LLM doesn’t know the end of the sentence while it’s processing the beginning. With the <query><query> repetition, by the time it starts reading the request for the second time, it has already stepped through the whole sentence and has it as context.
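A small sketch makes that explanation concrete. It assumes a strict left-to-right rule, where each position can only see the words before it and itself, and it uses whole words as a stand-in for tokens. The helper name `query_words_in_view` is made up for this example.

```python
def query_words_in_view(tokens, query_len):
    """For each position, count how many words of the first copy of the
    query are visible under a left-to-right (causal) rule."""
    return [min(i + 1, query_len) for i in range(len(tokens))]

query = "write me a legal letter to argue a parking fine".split()

single = query_words_in_view(query, len(query))
doubled = query_words_in_view(query + query, len(query))

# In the single query, the first word is processed having seen only itself.
print(single[0])            # 1
# In the doubled query, the first word of the second copy has the
# whole original request behind it.
print(doubled[len(query)])  # 10
```

In the doubled prompt, every word of the second copy is processed with the complete request already in view, which is the effect the repetition trick relies on.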

There are lots of other (significantly less funny) inventions that get around these limitations.

Companies have developed “reasoning models” and chain-of-thought scaffolding that encourage models to be intentionally verbose. The more words they output, the more computation is happening and the longer the fancy autocomplete has a chance to do smart things.

With several paragraphs of thinking-out-loud behind it as context, the model can then start predicting more useful next words.

The product makers mostly keep that hidden in the background behind a “thinking…” notice, but it also shows up in the slightly theatrical writing style you might be familiar with. A phrase like “but here’s the twist…” isn’t just a personality quirk. It’s a structural trick.

By prompting the model to deliberately introduce “it’s not just this, but that” turns or “but on the other hand” pivots, we allow it to escape dead-end sequences and avoid getting trapped in a single word-by-word trajectory of thought.

In other words, we’re hacking fancy autocomplete to behave more like planning and reasoning, even though under the hood it’s still just predicting the next word.

The same is true of agents.

When we build agentic systems, we are scaffolding language models so that the “next words” they predict correspond to:

- Tool calls

- Instructions

- Research plans

- Task decompositions

Not unlike <query><query>, chaining models together so that the output of one becomes the input to the next, in a chain like <plan draft><plan revision><suggested tools><actions>, allows the last step to have all the context it needs to take action.
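A chain like that can be sketched as a loop that keeps appending each stage's output to a shared context. In the sketch below, `toy_model` is a hypothetical placeholder for a real model call, and the task text is invented. The point is only that the final stage runs with everything earlier stages produced.

```python
def toy_model(context, stage):
    """Hypothetical stand-in for a model call: returns a labelled placeholder."""
    return f"<{stage} based on {len(context)} earlier item(s)>"

def run_chain(task, stages, model=toy_model):
    """Run each stage in order, feeding it everything produced so far."""
    context = [task]
    for stage in stages:
        output = model(context, stage)
        context.append(output)  # later stages see this output as input
    return context

STAGES = ["plan draft", "plan revision", "suggested tools", "actions"]
transcript = run_chain("Book a table for two on Friday", STAGES)
for item in transcript:
    print(item)
```

By the time the "actions" stage runs, its context holds the original task plus the draft, the revision, and the tool suggestions.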

The system feels like it is reasoning, planning, and acting. But the engine beneath it is still autocomplete — just contorted into extraordinarily powerful and useful shapes.

Fancy Autocomplete, Taken Seriously

If you want to make accurate predictions about how this technology will evolve and how useful it will become, the right mental model is not to dismiss autocomplete — but to take it more seriously.

Autocomplete, taken to absurd levels of accuracy, is not trivial. Being able to predict the next word across a vast and arbitrary array of human contexts takes a feat of effort almost as deep as Carl Sagan’s apple pie.

If you wish to autocomplete the next word, you must first understand the universe.